This is a continuation of a previous blog post featuring a critical look at current developments in the proptech industry, sparked by a recent visit to the Future: PropTech event.

If I was ever going to shoot a futuristic movie, it would probably be called something along the lines of “Rise of the Platforms” (there goes any hope I may have ever held out for a successful movie making career, I know). The trend in the proptech industry is definitely to try to cover an increasing number of use cases and data domains, and platforms are the traditional “delivery mode” for this.

Can I haz a platform for that?

Speaking from first-hand experience, customers exercise a constant “pull” to go broader, offer more features, help them future-proof their operations and respective client offerings, all the while playing nicely with others. And all of this makes sense. But trying to be all things to all people has never been a good idea (unless you’re Amazon or Google, but that’s a different story again; and they definitely did not start out that way but focused on “owning” one vertical first and expanding later).

While the exact path forward is unclear, there are some known-knowns, like:

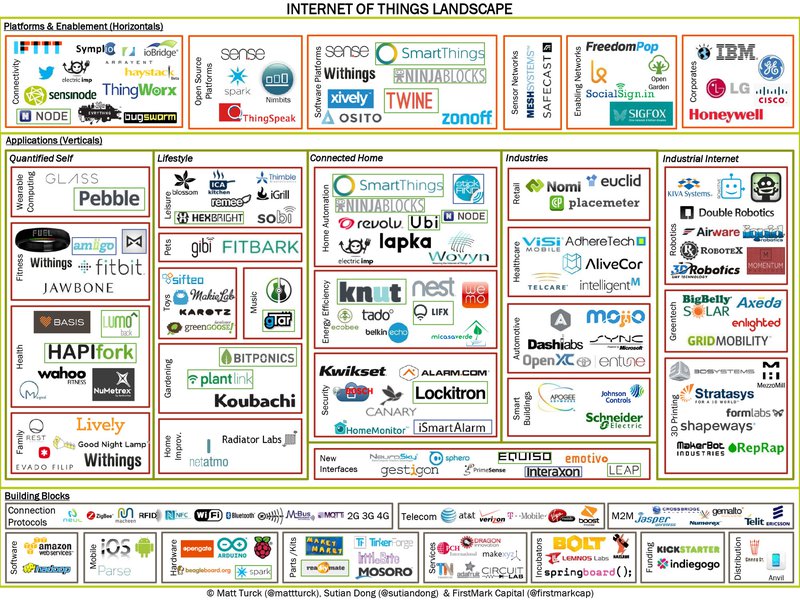

- The world definitely doesn’t need yet another IoT platform; there are already a mind-boggling 1,000 IoT platforms and counting, thank you very much.

- Highly vertically focused solutions like energy management are never going to grow beyond a relatively niche audience (alas, and no offence to the energy power users out there - even if there may be fewer of them than there are IoT platforms).

But where does one draw the line between a laser-focused offering that meets a highly specific use case which may not be highly scalable, and a broad-based platform for potentially all operational building data, with apps to serve some users and interfaces to enable innovation by other providers off of the same datasets?

If I had the answer to that, I am sure Softbank would come knocking with a $200M check. Just to get things started. In the meantime, we’ll have to figure things out, literally iteration by iteration, while pursuing the greater goal of a holistic (ouch) data platform for the built environment. But the overall direction of travel is clear. Building more features is not the issue, but development velocity is of course a trade-off. However, the key challenge is finding buyers (i.e., audiences) for what you’re building, even if it’s a little bit out there and goes beyond what’s typically specified in RfPs, and the roles for what you’re building have yet to be created within corporates. There are a few more Chief Data Officers now than there were only a few years ago, which is a good start. But where’s the Chief Platform Officer when you need them?! You get the idea.

After all, the prize isn’t more platforms, nor features or apps, but data. And whoever achieves critical mass in that first (likely for specific use cases) will have an interesting journey ahead and be able to leverage network effects to drive further growth at scale through partnerships and exponential value-add.

That sounded very pitchy right now, apologies. But if you’ve been around the block a few times, you’ll know what I mean and it’ll make perfect sense.

Data, data, and more data (at least as per mentions in PowerPoint presentations...)

For a start, can everyone PLEASE stop talking about “big data in real estate”. Now, and for at least a few more years, and outside of a few very specific use cases. Kthxbye!

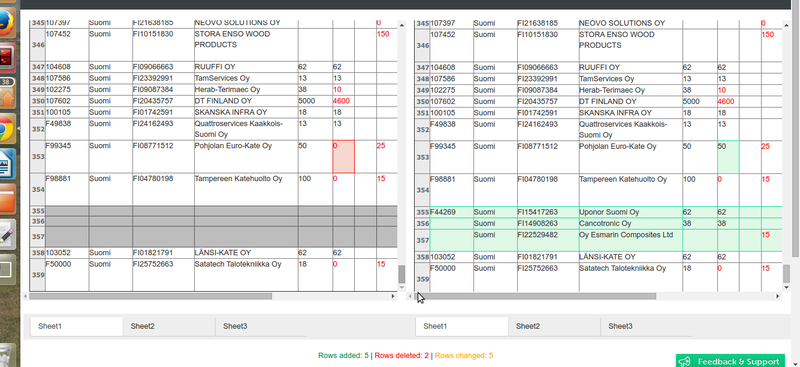

I hate to break it to y’all, but there is virtually NO true big data available in real estate today. Unless you define big data as “a spreadsheet that doesn’t fit on a single screen”, as someone before me once said about data in building energy management...

Big data is typically defined as at least terabyte scale, but more often petabyte. Terabyte is not an order of magnitude that’s hard to manage today even by basic platforms, and the leaders in the space - notably the consumer internet companies - easily manage many exabytes and increasingly zettabytes (yes, that’s a thing) of data. Not only that, but it’s also replicated across multiple data centres globally and available with single to double-digit milliseconds of latency. Crazy stuff.

Compare that to real estate, where you’re happy if you get your hands on a few MB (that’s megabytes) of data, and if you’re super lucky, you’re in gigabyte territory. The reality is that there are very few high-frequency data sources in buildings to start with, and the data they generate is typically single-dimensional, highly structured and can be compressed easily. It is also typically provided in CSV format via FTP (not even SFTP, mind), but that’s another problem.

Sure, there’s an increasing number of sensors being installed that generate sub-minute level data with minute-level data backhauls (4G connection allowing). And some individual buildings have as many as 30K sensors and more - cue my all-time favourite if somewhat over-referenced The Edge in Amsterdam (no offence - I was just hoping there would be many more buildings like it now). But it’s still a far cry from “big data”. So, just stop. Thanks.
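For the sceptics, here is a rough back-of-envelope sketch of what a 30K-sensor building might generate. The sensor count comes from the example above; the 30-second sampling interval and the 16 bytes per reading (say, a timestamp plus a value) are purely my assumptions for illustration, and the function names are made up for this post.

```python
# Back-of-envelope estimate. Apart from the sensor count mentioned in the text,
# every input here is an illustrative assumption, not a measured figure.
SECONDS_PER_DAY = 24 * 60 * 60


def raw_bytes_per_day(sensors: int, interval_s: int, bytes_per_reading: int) -> int:
    """Uncompressed bytes produced per day by evenly sampled sensors."""
    readings_per_sensor = SECONDS_PER_DAY // interval_s
    return sensors * readings_per_sensor * bytes_per_reading


def human(n_bytes: float) -> str:
    """Format a byte count using decimal (SI) units."""
    for unit in ("B", "kB", "MB", "GB", "TB", "PB"):
        if n_bytes < 1000:
            return f"{n_bytes:.1f} {unit}"
        n_bytes /= 1000
    return f"{n_bytes:.1f} EB"


# Assumed: one reading every 30 seconds, 16 bytes per reading.
per_day = raw_bytes_per_day(sensors=30_000, interval_s=30, bytes_per_reading=16)
per_year = per_day * 365

print(human(per_day), "per day;", human(per_year), "per year")
# prints: 1.4 GB per day; 504.6 GB per year
```

Under those assumptions, even a very heavily instrumented building lands at roughly half a terabyte of raw data per year, before any compression. Change the inputs as you see fit; you have to push them quite hard to get anywhere near petabytes.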

If you don’t believe me, I’d like to defer to someone who’s done this for a little longer than myself (yes, we’re that old): David Gerster, a newly appointed VP at JLL Spark, and self-proclaimed “data guy” who’s got the scar tissue to prove it. When asked about the biggest difference between his previous roles at large-scale consumer companies like Yahoo, Groupon etc and Commercial Real Estate, he had a key insight to share: data! Or rather, the lack of it. He also mentioned that the data that you do get hold of is often of questionable quality.

Welcome to our world, then, and the customer issues we routinely spend 80% of our support time on. Including but not limited to: how to get data in the first place, how to fix bad data, and most of all data sources that keep doing unexpected things like dropping out without warning, or sending readings that are at least an order of magnitude off of the expected values. Luckily, we have ways of handling this in the platform and beyond, but the argument holds. Even for large and complex sites like major international airports and commercial office portfolios.
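To make those two failure modes a bit more tangible, here is a minimal sketch of the kind of sanity checks I have in mind: spotting dropouts (gaps between readings) and readings that are an order of magnitude off. This is not how our platform does it; the function names, the 1.5x gap tolerance and the median-based comparison are all assumptions chosen for illustration.

```python
from statistics import median


def find_dropouts(timestamps, expected_interval_s, tolerance=1.5):
    """Return (previous, current) timestamp pairs whose gap exceeds
    tolerance * expected_interval_s. Timestamps are seconds, sorted ascending."""
    gaps = []
    for prev, curr in zip(timestamps, timestamps[1:]):
        if curr - prev > tolerance * expected_interval_s:
            gaps.append((prev, curr))
    return gaps


def flag_magnitude_outliers(values, factor=10.0):
    """Return indices of readings at least `factor` times larger or smaller
    than the median magnitude of the non-zero readings."""
    magnitudes = [abs(v) for v in values if v != 0]
    if not magnitudes:
        return []
    typical = median(magnitudes)
    flagged = []
    for i, v in enumerate(values):
        if v == 0:
            continue  # zeros need their own rule (e.g. a meter that is off)
        ratio = abs(v) / typical
        if ratio >= factor or ratio <= 1 / factor:
            flagged.append(i)
    return flagged


# Hypothetical minute-level meter data with one dropout and one bad reading.
timestamps = [0, 60, 120, 600, 660]
readings = [12.1, 11.8, 12.4, 1210.0, 12.0]

print(find_dropouts(timestamps, expected_interval_s=60))  # [(120, 600)]
print(flag_magnitude_outliers(readings))                  # [3]
```

Using the median rather than the mean as the reference keeps a single wild reading from dragging the baseline along with it. Real data would of course need more than this (seasonality, unit mix-ups, meters that reset), but it shows the shape of the problem.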

Yes, data is certainly the “engine room” for innovation in real estate (as per our friend at Softbank).

But it will be some time before we see data in commercial real estate (CRE) even approaching the levels we have seen for more than a decade in the consumer internet or even “just” the retail (as in, e-commerce) domains. And maybe CRE will always be at least 10 years behind, until the spheres completely merge one day.

In the meantime, let’s focus on what we can do about this and what we can do with the data that we do capture and find to be reliable.

So what (‘s next)?

To sum it all up: I’m pretty sure I’ve not seen the future… yet!

The answer to what’s going to have to happen is simple: bringing it all together! Customers clearly want end-to-end, turnkey, and everything-as-a-service. That’s a known-known today.

So will there be one app (for reference to the KILLER app phenomenon, see here) or platform to rule them all? Is that even possible? And if so, who’s best placed to deliver this in the real estate / proptech value chain? Or will Google just sweep it all up, once there’s a single connector to all the buildings in the world?

Maybe a good way to wrap up is with the actual theme of the Future: PropTech event: Open Collaboration. There is definitely a lot of mileage in this concept. No-one, not even IBM or Google or Fabriq (see what I’ve done here?!), will be able to build the one platform that does everything, across all use cases and layers of the stack.

But ultimately the value for the end customer, from tenants to service providers and landlords / investors, comes from combining different applications and datasets and deriving insights and optimisation opportunities that we can’t even imagine today and that directly impact the bottom line of a large number of organisations in meaningful ways.

Yet at the same time, pretty much everyone wants to be the very platform that pulls it all together. Here at Fabriq, that’s definitely our bias - by design if you will - and we’re no exception to that. It will be interesting to see what the most scalable angle is… e.g. work “upwards” from energy and resource management towards tenant apps, “sideways” from asset management systems to also encompass other metrics, or “downwards” from said tenant apps to include other operational metrics and use cases. And how do we manage those partnerships we will need to make it happen? Is it really all going to be dealt with by APIs on a technical level? Will the plethora of bus-level protocols (BACnet, Modbus etc.), data transmission standards and formats that we’re all struggling with today be replaced by 100s if not 1000s of APIs (one per platform / app), each with its own feature set and documentation? Or is there a smarter way of making integrations and data exchange at scale happen in this sector? Will every end user also have their own data lake (or swamp…) on top of the plethora of platforms and apps they already use? Or will there be a more streamlined approach that means that the smart people in the room (data analysts, industry experts, behavioural scientists) don’t have to spend most of their time figuring out how to get hold of the data they need to do their job properly?

Now that is a question for another post. There has to be one. Always.

Ad astra per aspera! Always so fitting.